Key Takeaways

- Originality.AI ranked first among 12 detectors in the RAID benchmark, the largest independent AI detection study to date, which tested more than 6 million text records across 11 LLMs and 11 adversarial attack types.

- Its Lite model claims 99% accuracy with a 0.5% false positive rate; the Turbo model claims 99%+ accuracy and catches up to 97% of AI-humanized content.

- The detector uses a custom Transformer-based architecture trained with an ELECTRA-style generator–discriminator method on 160 GB of text data and millions of labeled samples.

- Independent academic studies place its real-world accuracy between 83% and 98%, depending on the content type and testing conditions.

- Beyond AI detection, the platform bundles plagiarism checking, real-time fact verification, readability scoring, and sentence-level AI-probability highlighting.

- Known limitation: the tool can be aggressive, sometimes flagging polished or formulaic human writing as AI-generated.

Originality.AI sits at the top of nearly every independent AI detection comparison published in the past two years. In the RAID benchmark — a collaborative study by researchers at the University of Pennsylvania, University College London, King’s College London, and Carnegie Mellon University — the tool outperformed 11 other detectors across more than 6 million text records. It ranked first in 9 out of 11 adversarial attack categories and first in 5 of 8 content domains, according to the study’s results.

What gives Originality.AI this edge is a combination of architecture choices, aggressive retraining cycles, and a product design tailored to the people who buy content at scale and need to screen it for AI use: SEO teams, publishers, and content agencies. Rather than offering a simple text box that returns a percentage, the platform delivers sentence-level analysis, full-website scanning, plagiarism detection, and fact-checking in a single interface. That practical focus, paired with detection accuracy that holds up under adversarial conditions, is why it keeps surfacing as the industry reference point.

How the Detection Engine Works Under the Hood

Originality.AI’s engineering team has disclosed several details about the model architecture, making it one of the more transparent commercial detectors. The system is built on a custom Transformer-based language model — inspired by BERT’s bidirectional approach but not a direct copy of it. The team pre-trained a new language model from scratch on 160 GB of text data, using an architecture they describe as entirely new yet still rooted in the Transformer framework.

The training method borrows heavily from ELECTRA, a technique developed at Google Research. ELECTRA works by training two models in tandem: a generator that produces plausible replacement tokens, and a discriminator that learns to tell real tokens from fakes. After training, the generator is discarded and the discriminator becomes the working model. This is more efficient than standard masked language modeling (the approach BERT uses) because the discriminator learns from every token in the input, not just the 15% that gets masked.
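The efficiency argument is easier to see in a toy example. The sketch below is not Originality.AI's training code; it swaps the learned generator for a random token replacer (an assumption made to keep the example self-contained) and simply counts how many positions carry a training signal under each approach. The function names and the tiny vocabulary are hypothetical.

```python
import random

def corrupt(tokens, vocab, mask_rate=0.15, seed=0):
    """Toy stand-in for the ELECTRA generator: replace about mask_rate of
    the tokens with random vocabulary items. A real generator is a small
    masked language model, not a random picker."""
    rng = random.Random(seed)
    n_mask = max(1, round(len(tokens) * mask_rate))
    masked_positions = set(rng.sample(range(len(tokens)), n_mask))
    corrupted = [
        rng.choice(vocab) if i in masked_positions else tok
        for i, tok in enumerate(tokens)
    ]
    return corrupted, masked_positions

def discriminator_labels(original, corrupted):
    """Replaced-token-detection targets: 1 = replaced, 0 = original.
    Every position gets a label, so every token contributes to the loss."""
    return [int(o != c) for o, c in zip(original, corrupted)]

tokens = "the quick brown fox jumps over the lazy dog again".split()
vocab = ["cat", "runs", "under", "blue", "slow"]  # hypothetical vocabulary

corrupted, masked = corrupt(tokens, vocab)
labels = discriminator_labels(tokens, corrupted)

print(len(labels), "positions supervised for the discriminator")
print(len(masked), "positions supervised under masked language modeling")
```

With ten tokens, the discriminator receives a label at all ten positions, while a masked language model would only learn from the two masked ones.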

Originality.AI applied this generator–discriminator method, then fine-tuned the resulting model on a dataset of millions of labeled samples. The human-written portion was sourced from a wide range of real-world text, then cleaned and processed.

The AI-generated portion was produced using multiple large language models and a mix of sampling strategies — temperature scaling, Top-K truncation, and nucleus sampling — to ensure diversity. The team also fine-tuned the generation models on their human text dataset, forcing the AI output to closely mimic natural language. The goal: push the decision boundary between “human” and “AI” as close to real-world conditions as possible.
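For readers unfamiliar with the three sampling strategies named above, here is a minimal pure-Python sketch of each. It operates on a hand-written list of logits rather than a real language model, and the function names are illustrative assumptions, not part of any Originality.AI tooling.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Temperature scaling: values below 1 sharpen the distribution,
    values above 1 flatten it."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_filter(probs, k):
    """Top-K truncation: keep the k most likely tokens and renormalize."""
    keep = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    total = sum(probs[i] for i in keep)
    return {i: probs[i] / total for i in keep}

def nucleus_filter(probs, top_p):
    """Nucleus sampling: keep the smallest set of top tokens whose
    cumulative probability reaches top_p, then renormalize."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = {}, 0.0
    for i in order:
        kept[i] = probs[i]
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    total = sum(kept.values())
    return {i: p / total for i, p in kept.items()}

def sample(dist, rng):
    """Draw one token id from a {token_id: probability} mapping."""
    ids = list(dist)
    return rng.choices(ids, weights=[dist[i] for i in ids], k=1)[0]

logits = [2.0, 1.0, 0.5, -1.0]  # made-up scores for a four-token vocabulary
rng = random.Random(0)
probs = softmax(logits, temperature=0.7)
print("top-k sample:", sample(top_k_filter(probs, k=2), rng))
print("nucleus sample:", sample(nucleus_filter(probs, top_p=0.9), rng))
```

Mixing these settings when generating training text changes how predictable the output is, which is the kind of diversity the paragraph above describes.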

Why a Discriminative Architecture Beats a Generative One

The Originality.AI team concluded during testing that a discriminative model with a bidirectional architecture (one that reads text in both directions) outperforms generative models of equivalent size for detection tasks. Generative models predict the next word in a sequence and then compare that prediction against what was actually written. Discriminative models instead classify entire input sequences. The bidirectional setup gives the model richer contextual information at each token position, making it harder for evasion techniques — paraphrasing, synonym swapping, sentence restructuring — to fool the detector.

The team also found that larger models produce more accurate and robust predictions, but they balanced size against response time. The deployed model is large enough to maintain high accuracy while still returning results quickly, thanks to what the company describes as modern deployment techniques.

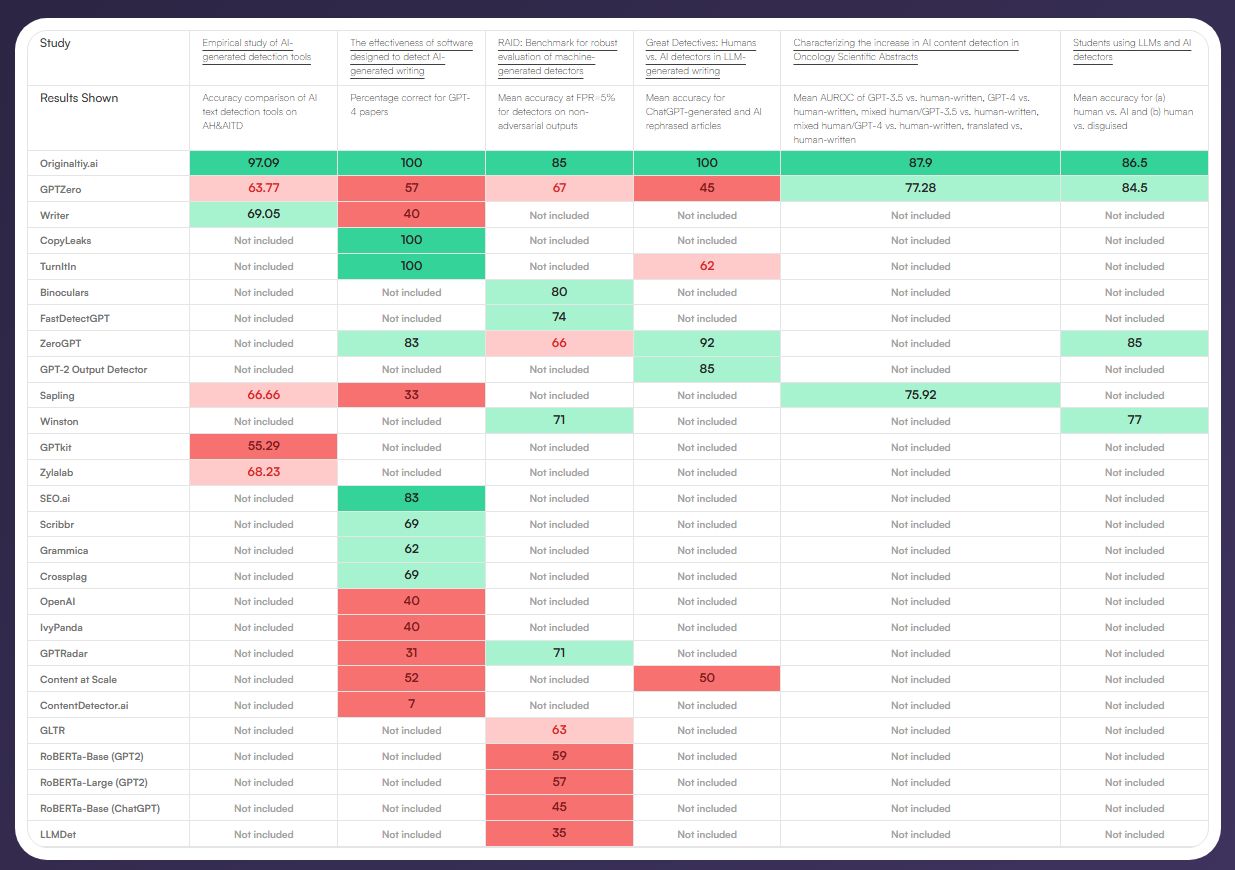

Benchmark results illustrating how different AI detectors compare against each other. Image credit: Originality.ai

RAID Benchmark: The Numbers That Built the Reputation

The RAID study (Robust AI Detection) remains the most comprehensive independent evaluation of AI content detectors completed to date. Published by a cross-institutional research team and standardized at a 5% false positive rate, the study tested each detector against 11 different text-generating LLMs and 11 types of adversarial attacks.

| Metric | Originality.AI Result | Context |
|---|---|---|
| Overall ranking | 1st of 12 detectors | Highest accuracy at 5% FPR threshold |
| Adversarial attack categories (1st place) | 9 of 11 | Including paraphrasing, humanizing, and style transfer |
| Content domains (1st place) | 5 of 8 | Tested across news, academic, creative, and other domains |
| Paraphrased content accuracy | 96.7% | Industry average for paraphrased detection is roughly 59% |
| ChatGPT-generated text accuracy | 98.2% | Per independent analysis of RAID data |

The 96.7% accuracy on paraphrased content is particularly notable. Paraphrasing and AI-humanizer tools are the most common evasion techniques. Most detectors see sharp accuracy drops when text has been run through these tools. Originality.AI resists this because its training data specifically includes paraphrased and humanized AI text generated through multiple models and sampling techniques.
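To make the 5% false positive rate standardization concrete, here is a minimal sketch of how a threshold can be calibrated on human-written text and then used to measure how much AI text is caught. The scores are invented for illustration and the function names are assumptions; none of this is taken from the RAID codebase.

```python
def threshold_at_fpr(human_scores, target_fpr=0.05):
    """Return a threshold such that the share of human texts scoring
    strictly above it does not exceed target_fpr."""
    ranked = sorted(human_scores, reverse=True)
    allowed = int(len(ranked) * target_fpr)  # human texts we may misflag
    return ranked[allowed]

def detection_rate(ai_scores, threshold):
    """Share of AI-generated texts scoring above the threshold."""
    return sum(s > threshold for s in ai_scores) / len(ai_scores)

# Hypothetical detector scores (probability that the text is AI-generated).
human_scores = [i / 100 for i in range(0, 40, 2)]  # 20 human texts
ai_scores = [0.2, 0.35, 0.5, 0.7, 0.9, 0.95, 0.99, 0.4, 0.8, 0.6]

t = threshold_at_fpr(human_scores)
fpr = sum(s > t for s in human_scores) / len(human_scores)
print(f"threshold={t:.2f}  FPR={fpr:.0%}  detection rate={detection_rate(ai_scores, t):.0%}")
```

Fixing the false positive rate first puts every detector on the same footing, so the remaining number (how much AI text is caught) can be compared directly.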

Beyond RAID: What Other Studies Found

Several independent studies published in 2025 and early 2026 reinforce the RAID findings, though they also reveal that real-world accuracy varies with content type and test methodology.

| Study / Source | Accuracy Found | False Positive Rate | Notes |
|---|---|---|---|
| Journal of AI, Humanities, and New Ethics (March 2025) | 96% overall | 8% | Ranked Originality.AI as the best model across peer-reviewed studies |
| Arabic articles study (Arxiv, Nov 2025) | 96% | Lowest among commercial tools | Tested 14 detectors across 16,000+ samples |
| Arizona State University (STEM writing) | 98% | Low | Correctly identified 49/50 human essays and 48/49 AI submissions |
| PubMed Central (medical texts) | 97% | Not reported | Tested on GPT-3.5, GPT-4, and human-written medical documents |
| CyberNews (2026 evaluation) | 92% | 5.7% | Broader content types, real-world conditions |

The gap between Originality.AI’s own claims (99% Lite, 99%+ Turbo) and independent results (83%–98%) comes down to testing conditions. Internal tests use carefully controlled datasets; independent studies introduce messier, more varied content. This is normal across all AI detection tools. The important point: even at the lower end of independent measurements, Originality.AI consistently finishes first or second among commercial detectors.
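As a worked example of how these headline figures are derived, the Arizona State University row in the table above can be turned into a confusion matrix. The counts come from that row (49 of 50 human essays and 48 of 49 AI submissions identified correctly); the helper function is just an illustrative sketch.

```python
def summarize(tp, fn, tn, fp):
    """Basic rates from confusion-matrix counts, treating AI text as the
    positive class."""
    total = tp + fn + tn + fp
    return {
        "accuracy": (tp + tn) / total,
        "false_positive_rate": fp / (fp + tn),  # human text flagged as AI
        "false_negative_rate": fn / (fn + tp),  # AI text that slipped through
    }

# 48 of 49 AI submissions caught, 49 of 50 human essays cleared.
asu = summarize(tp=48, fn=1, tn=49, fp=1)
print({name: round(value, 3) for name, value in asu.items()})
```

The 97 correct calls out of 99 round to the reported 98%, and the single misjudged human essay corresponds to a 2% false positive rate on that sample.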

Three Detection Models for Different Risk Tolerances

Originality.AI does not force all users into a single sensitivity setting. Instead, it offers three distinct detection models, each calibrated for a different use case.

The Lite model is designed for high-volume screening where minimizing false positives matters most. It claims 99% accuracy with a 0.5% false positive rate. Editorial teams that allow writers to use tools like Grammarly for grammar and clarity tend to prefer Lite, since it is less likely to flag AI-assisted editing as full AI generation.

The Turbo model is the strictest option. It claims 99%+ accuracy and catches up to 97% of AI-humanized content, but carries a higher false positive rate of 1.5%. Organizations with zero-tolerance AI policies — academic publishers, for instance — use Turbo when they prefer to over-flag rather than under-detect.

The Academic model, released in September 2025, targets educational settings specifically. It claims 99%+ accuracy with a false positive rate under 1%, tuned to handle the particular patterns of student writing.

Separately from these three, a Multi Language model extends detection to 30 languages, reporting 97.81% accuracy with a false negative rate of 1.99%.
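The difference between a 0.5% and a 1.5% false positive rate sounds small until it is multiplied by volume. The quick calculation below uses the claimed rates for Lite and Turbo from the text; the batch of 1,000 human-written documents is a hypothetical figure chosen only for illustration.

```python
CLAIMED_FPR = {"Lite": 0.005, "Turbo": 0.015}  # vendor-claimed rates from the text

def expected_false_flags(human_documents, fpr):
    """Expected number of human-written documents wrongly flagged as AI."""
    return human_documents * fpr

batch_size = 1000  # hypothetical number of genuinely human-written documents
for model, fpr in CLAIMED_FPR.items():
    flags = expected_false_flags(batch_size, fpr)
    print(f"{model}: about {flags:.0f} false flags per {batch_size} human documents")
```

At that volume, choosing Turbo over Lite would mean roughly three times as many writers wrongly questioned, which is the trade-off the stricter setting accepts.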

Full-Suite Content Integrity: More Than Just Detection

Part of Originality.AI’s hold on the market comes from bundling several content verification tools into one platform. Competing detectors tend to do one thing — detect AI text. Originality.AI stacks additional capabilities on top.

Sentence-level AI highlighting is the most distinctive feature. Rather than returning a single percentage score for an entire document, the tool assigns an AI probability to each sentence and displays the result using color-coded highlighting. Sentences likely written by a human appear in green; sentences flagged as AI-generated appear in red gradients. This lets editors see exactly which passages triggered the detector and make targeted revisions.
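A rough sketch of how sentence-level highlighting could be structured is shown below. It is not Originality.AI's implementation: the sentence splitter is naive, the color cut-offs are assumptions, and `toy_scorer` is a placeholder standing in for a trained detection model.

```python
import re

def split_sentences(text):
    """Naive splitter: break after ., ! or ? followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]

def color_for(ai_probability):
    """Map a probability to a highlight color (cut-offs are assumptions)."""
    if ai_probability < 0.5:
        return "green"
    return "light red" if ai_probability < 0.8 else "dark red"

def highlight(text, score_sentence):
    """Score each sentence separately and attach a highlight color."""
    rows = []
    for sentence in split_sentences(text):
        p = score_sentence(sentence)
        rows.append((sentence, p, color_for(p)))
    return rows

def toy_scorer(sentence):
    """Placeholder only: a real detector would call a trained model here."""
    return 0.9 if len(sentence.split()) > 8 else 0.2

sample_text = "Short one. This sentence is deliberately much longer than the first one was."
for sentence, p, color in highlight(sample_text, toy_scorer):
    print(f"[{color}] {p:.0%} {sentence}")
```

The useful design point is that scoring happens per sentence rather than per document, so an editor can see which passages drove the overall result.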

Website-wide scanning allows SEO teams and publishers to crawl entire domains and check every page for AI content, rather than pasting text into a box one article at a time. For agencies managing dozens or hundreds of client sites, this turns detection from a manual spot-check into a scalable audit process.

Plagiarism detection runs alongside the AI scan. Originality.AI claims to outperform established plagiarism checkers like Copyscape and Turnitin at catching patchwork and paraphrased plagiarism — the kind that simple word-matching algorithms miss.

Fact-checking adds a layer of content verification that addresses one of the core risks of AI-generated text: factual errors. The tool attempts to verify claims in real time. Independent testing of this feature found approximately 72% accuracy in identifying factual errors — useful as a first pass, though not reliable enough to replace human fact-checking.

Readability and grammar analysis round out the suite, giving publishers a single dashboard for content quality assessment.

The False Positive Problem: Where Originality.AI Gets It Wrong

No AI detector achieves 100% accuracy, and Originality.AI’s aggressive detection posture means it sometimes flags human-written content as AI-generated. This is the tool’s most-discussed limitation.

Andrew Holland, an SEO strategist who tested the tool extensively for his AI Growth Lab newsletter, documented several revealing patterns. When he ran content through Originality.AI that included Grammarly-suggested edits, the detector flagged those sections as partially AI-generated. Originality.AI’s own documentation acknowledges this: when users accept Grammarly’s “Rephrase” or “Rewrite” suggestions, those passages are likely to trigger AI detection. Spelling corrections alone do not cause flags.

Holland also found that text length matters significantly. When he analyzed 500 words from Donald Trump’s 2025 inaugural address, the tool reported it as 99% likely AI-generated. When he fed it 2,500 words from the same speech, the detection swung to the correct result. Short text samples give the model less statistical information to work with, leading to less reliable outputs. Originality.AI’s own team recommends a minimum of 100 words and notes that the model reaches acceptable reliability at 50 tokens or longer.

Highly polished, formal, or formulaic human writing is another trigger. Content that has been through multiple rounds of professional editing — the kind of clean, structured prose that characterizes good publishing — can look statistically similar to AI output. The detector reads patterns like low burstiness (uniformly structured sentences), predictable punctuation, and high grammatical precision as potential AI signals. Skilled writers and professionally edited text sometimes trip these same wires.

Holland found he could take AI-generated content and pass detection by removing em dashes and adding conversational phrases. In one test, removing a single em dash shifted the score from 66% likely AI to 99% likely human. Em dashes are heavily used by AI writing tools — but they are also standard punctuation for many professional writers and editors.

How Originality.AI Keeps Pace With New AI Models

The AI generation landscape moves fast. In 2025 alone, major releases included GPT-5, DeepSeek, Claude 4 Sonnet, and Claude 4 Opus. Each new model generates text with subtly different statistical signatures, and a detector trained only on older models will miss these patterns.

Originality.AI addresses this with a continuous retraining pipeline. When a new LLM launches, the team generates text using that model across multiple sampling strategies, adds it to the training dataset, and retrains the detection model. The company claims to update its models faster than most competitors — a claim supported by the fact that its detector currently covers GPT-5, Claude 4 Opus, Claude 4 Sonnet, GPT-4.1, ChatGPT-4o, Gemini 2.5, Claude 3.7, and DeepSeek V3, among others.

This retraining loop is not optional. The Originality.AI team has stated that each time a new text-generation model is released, the detection model must be retested and evaluated. If the existing detector does not perform adequately against the new model’s output, retraining follows. The diversified training data — generated from many models using varied sampling techniques — provides a degree of forward compatibility, but ongoing updates remain necessary.
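The retest-then-retrain decision described above can be sketched as a simple check. Everything here is hypothetical: the 95% minimum detection rate, the function names, and the stub detector are assumptions for illustration, not values or code disclosed by Originality.AI.

```python
def detection_rate(detector, ai_samples, threshold=0.5):
    """Share of known AI-generated samples the detector flags."""
    flagged = sum(detector(text) > threshold for text in ai_samples)
    return flagged / len(ai_samples)

def needs_retraining(detector, new_model_samples, min_rate=0.95):
    """True when the detector misses too much output from a new LLM."""
    return detection_rate(detector, new_model_samples) < min_rate

def stub_detector(text):
    """Stand-in scorer: pretends text from a newer model looks less AI-like."""
    return 0.3 if "new-style" in text else 0.9

old_model_outputs = ["sample a", "sample b", "sample c", "sample d"]
new_model_outputs = ["new-style a", "new-style b", "sample c", "sample d"]

print(needs_retraining(stub_detector, old_model_outputs))  # False
print(needs_retraining(stub_detector, new_model_outputs))  # True
```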

Detection Patterns: What the Tool Actually Measures

AI content detectors, including Originality.AI, analyze statistical patterns in text rather than identifying any kind of hidden watermark. The primary signals the model evaluates include burstiness (how sentence length and word placement vary throughout a document), word frequency distributions, punctuation predictability, lexical diversity, and syntactic variation.

Human writers tend to produce uneven text — long sentences followed by short ones, shifts in tone, occasional grammatical imperfections, colloquialisms. AI-generated text, by contrast, tends toward uniformity: balanced sentence lengths, correct but predictable punctuation, and consistent vocabulary. The detector’s job is to quantify these differences and assign a probability score.
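Two of these signals are simple enough to compute by hand. The sketch below measures burstiness as the variation in sentence length and lexical diversity as a type-token ratio. These are common simplified proxies chosen for illustration; a trained detector like the one described in this article learns its features from data rather than using formulas like these directly.

```python
import re
import statistics

def sentence_lengths(text):
    """Word count of each sentence, using a naive punctuation split."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]

def burstiness(text):
    """Coefficient of variation of sentence length (std dev / mean).
    Zero means every sentence has the same length."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)

def type_token_ratio(text):
    """Lexical diversity: unique words divided by total words."""
    words = re.findall(r"[a-z']+", text.lower())
    return len(set(words)) / len(words) if words else 0.0

uniform = "The cat sat down. The dog ran off. The bird flew away."
varied = ("No. The storm rolled in over the hills before anyone "
          "had time to close the shutters. We ran.")

for name, text in [("uniform", uniform), ("varied", varied)]:
    print(f"{name}: burstiness={burstiness(text):.2f} "
          f"type-token ratio={type_token_ratio(text):.2f}")
```

The evenly paced sample scores zero burstiness, while the sample that mixes a one-word sentence with a long one scores much higher, which mirrors the human-versus-AI contrast described above.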

The scoring works as a binary classification problem. The model takes text as input and outputs a probability that the text was generated by AI. If that probability exceeds a set threshold (typically 0.5), the text is classified as AI-generated. The final score presented to the user represents the model’s confidence level, not a certainty.
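In code, that final step is short. The sketch below assumes the model emits a single raw score (a logit) that is squashed into a probability with a sigmoid, which is a common setup for binary classifiers; the article does not confirm the exact output layer Originality.AI uses.

```python
import math

def ai_probability(logit):
    """Convert a raw model score into a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-logit))

def classify(logit, threshold=0.5):
    """Label the text and return the probability shown to the user."""
    p = ai_probability(logit)
    label = "AI-generated" if p > threshold else "human-written"
    return label, p

for logit in (-2.0, 0.0, 2.0):  # made-up raw scores
    label, p = classify(logit)
    print(f"logit={logit:+.1f} -> {p:.0%} likely AI -> {label}")
```

Raising the threshold trades missed AI text for fewer false accusations, which is the same dial that separates a lenient model from a strict one.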

Who Uses Originality.AI and Why

The tool was built for web publishers, SEO agencies, and content marketers — not for academic grading or casual personal use. This focus shapes both the product features and the tolerance settings. A content agency buying 50 articles a week from freelance writers needs to verify delivery quality at scale. A publisher running a portfolio of websites needs to audit thousands of pages for AI content after Google’s repeated warnings about “scaled content abuse.” These are the core use cases Originality.AI serves, and its website scanning, batch processing, and team collaboration features are designed around them.

Academic users can access the dedicated Academic model, but several independent reviewers have cautioned that using any AI detector — including Originality.AI — as the sole basis for academic integrity decisions is risky. False positives carry real consequences in educational settings: accusations of cheating, lost scholarships, and disciplinary actions. The company itself states that an AI detection score alone should not be used for academic discipline.

The Bigger Question: Detection Versus Content Analysis

Holland’s testing led him to an argument that many in the SEO industry now echo: AI detectors are functioning less as binary “human or machine” classifiers and more as content quality analysis tools. They identify polished, well-structured writing — which may or may not be AI-generated — and they flag statistical patterns that correlate with machine output.

This does not make the tools useless. It means the output is a signal, not a verdict. A score of 65% likely AI is an invitation to ask follow-up questions: How much human editing shaped this piece? Was AI used for drafting, research, or polishing? Did a subject-matter expert review the final version? These are the questions that matter to content buyers, and a detection score gives them a starting point for the conversation.

Originality.AI appears to recognize this direction. The platform already combines detection with plagiarism checking, fact verification, and readability scoring — moving toward a comprehensive content quality dashboard rather than a single pass/fail gate. As AI-assisted writing becomes standard practice across industries, the value of these tools will increasingly depend on how well they help humans make informed decisions about content quality, rather than whether they can draw a clean line between “human” and “AI.”


Sources: Originality.ai, Andrew Holland on LinkedIn, Ampfire

Written by Alius Noreika