Key Takeaways

- Vertex AI is Google Cloud’s unified platform for building, deploying, and scaling generative AI models, machine learning models, and AI agents at enterprise scale.

- The platform is transitioning to become part of the Gemini Enterprise Agent Platform, expanding its agent-building capabilities.

- Model Garden offers access to over 200 models, including Google’s Gemini, Imagen, and Veo; Anthropic’s Claude; Mistral; Meta’s Llama; and Google’s open-source Gemma.

- Vertex AI Studio supports prompt design, testing, and management using text, images, video, and code inputs.

- The platform covers the entire machine learning lifecycle: data preparation, training, evaluation, deployment, and monitoring.

- Built-in MLOps tools include Pipelines, Model Registry, Feature Store, Model Monitoring, and Experiments.

- New Google Cloud customers receive up to $300 in free credits to test the platform.

- Pricing follows pay-as-you-go rates starting at $0.0001 per 1,000 characters for text generation and $0.03 per pipeline run.

Vertex AI Defined: One Platform for AI Development

Vertex AI is Google Cloud’s open, unified platform for building, deploying, and scaling generative AI applications, machine learning models, and intelligent agents. It brings prompt engineering, model training, fine-tuning, deployment, monitoring, and governance into one connected environment, removing the need to stitch together separate tools across the AI development cycle.

The platform now operates as part of the broader Gemini Enterprise Agent Platform. This shift positions Vertex AI as the technical foundation where developers and data scientists prototype, customize, and run production AI workloads, while Gemini Enterprise serves as the destination for registering and governing the agents they create.

Who Uses Vertex AI and Why

Vertex AI targets three main audiences: developers building generative AI applications, data scientists training and tuning machine learning models, and enterprise teams deploying agents into business workflows. The appeal lies in breadth: a developer can prompt a Gemini 3 model in the morning, fine-tune a Llama variant in the afternoon, and deploy an agent built with the Agent Development Kit by the end of the day, all without leaving the platform.

GA Telesis CEO Abdol Moabery described the value this way: “The accuracy of Google Cloud’s generative AI solution and practicality of the Agent Platform gives us the confidence we needed to implement this cutting-edge technology into the heart of our business and achieve our long-term goal of a zero-minute response time.”

Model Garden: Access to 200+ AI Models

Model Garden is the catalog at the centre of Vertex AI. It hosts more than 200 models drawn from three sources: Google’s first-party models, third-party commercial partners, and open-source projects.

| Model Category | Examples | Primary Use |
|---|---|---|
| Google foundation models | Gemini 3 Flash, Gemini 3 Pro, Imagen, Veo, Lyria, Chirp | Text, image, video, audio generation; multimodal reasoning |
| Third-party partner models | Anthropic’s Claude family, Mistral AI | Managed API access to leading commercial models |
| Open-source models | Gemma, Llama | Customization, deployment flexibility |

Anthropic’s Claude models and Mistral are offered as fully managed model-as-a-service APIs, meaning customers consume them without provisioning infrastructure. The Gemini 3 Pro line also includes Nano Banana Pro, an image model built on Gemini 3 Pro for advanced image generation and editing.

Vertex AI Studio: Prompt Design and Testing

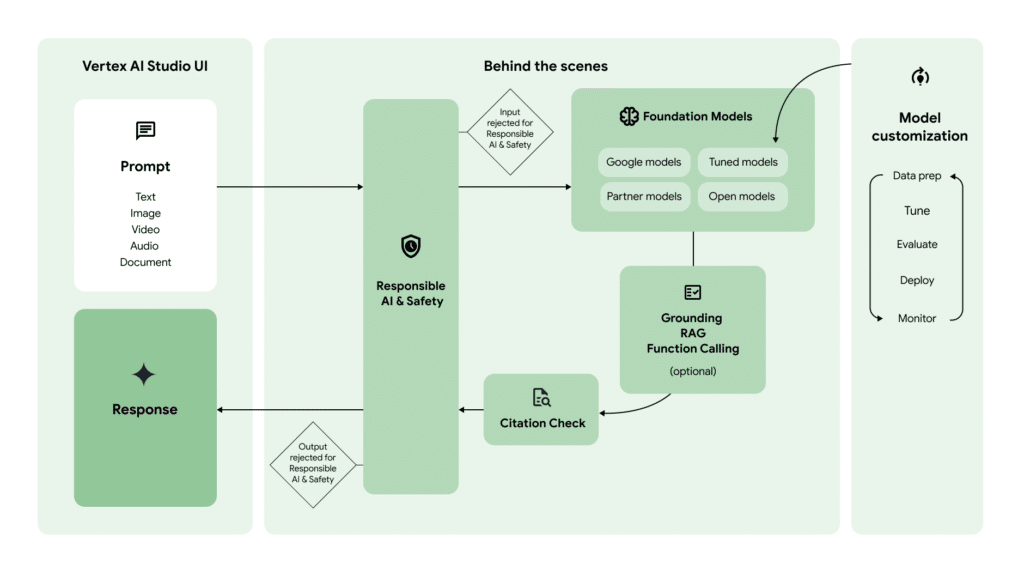

Vertex AI Studio (also referred to as Agent Studio in the newer Agent Platform branding) is where developers prototype with foundation models. It accepts text, code, image, and video inputs, supporting use cases such as extracting text from images, converting image content to JSON, generating descriptions of uploaded media, and building HTML mockups from screenshots.

The Studio also serves as the launchpad for prompt design strategies, with classification, summarization, and information extraction being the most common applications. Developers can move from notebook experiments directly to API calls using a generated API key.
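To make the classification strategy concrete, here is a minimal sketch of a few-shot prompt builder in plain Python. It does not call Vertex AI or any SDK. The function name, the label set, and the example texts are all hypothetical, chosen only to show the shape of a prompt that could be pasted into the Studio or sent through an API.

```python
from typing import Sequence


def build_classification_prompt(
    labels: Sequence[str],
    examples: Sequence[tuple[str, str]],
    text: str,
) -> str:
    """Assemble a few-shot classification prompt as plain text."""
    # Reject examples whose label is outside the allowed set.
    unknown = [label for _, label in examples if label not in labels]
    if unknown:
        raise ValueError(f"example labels not in label set: {unknown}")

    lines = [
        "Classify the text into exactly one of these labels: "
        + ", ".join(labels) + ".",
        "",
    ]
    # Each worked example shows the model the expected input/output pattern.
    for sample, label in examples:
        lines += [f"Text: {sample}", f"Label: {label}", ""]
    # The final entry is left open for the model to complete.
    lines += [f"Text: {text}", "Label:"]
    return "\n".join(lines)


# Hypothetical support-ticket routing example.
prompt = build_classification_prompt(
    labels=["billing", "technical", "other"],
    examples=[
        ("I was charged twice this month.", "billing"),
        ("The dashboard will not load.", "technical"),
    ],
    text="My invoice shows the wrong amount.",
)
print(prompt)
```

Keeping the prompt as a pure function of its inputs makes it easy to version and test separately from whichever model eventually receives it.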

Building AI Agents with Vertex AI Agent Builder

Vertex AI Agent Builder is the full-stack system for creating, managing, and deploying AI agents. It revolves around two main components:

The Agent Development Kit (ADK) is an open-source framework for building and orchestrating agents. Developers write agent logic, define tools, and chain reasoning steps using the kit’s primitives.

The Vertex AI Agent Engine is the managed, serverless runtime where agents execute in production. Each agent receives an Agent Identity tied to Google Cloud’s Identity and Access Management system, providing authentication and a clear audit trail for security and compliance teams.

Agents built on Vertex AI can be promoted to the Gemini Enterprise app, where organizations register, manage, and govern them across the broader business.

Customizing Models for Enterprise Data

Out-of-the-box foundation models rarely match a specific business context. Vertex AI offers several customization paths:

Grounding connects model responses to enterprise data sources or Google Search, reducing hallucinations and tying answers to verifiable references.

Vertex AI Training supports both Supervised Fine-Tuning (SFT) and Parameter-Efficient Fine-Tuning (PEFT) for models including Gemini. PEFT is particularly useful when teams want behaviour adjustments without the cost of full retraining.

Function Calling lets models trigger external APIs, while Retrieval-Augmented Generation (RAG) pulls information from knowledge bases at inference time.
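The function-calling pattern can be sketched on the application side without any SDK. In the example below, the JSON payload shape, the `get_order_status` tool, and the `dispatch` helper are assumptions made for illustration. Real request and response formats depend on the model and client library in use.

```python
import json
from typing import Any, Callable


def get_order_status(order_id: str) -> dict[str, Any]:
    """Hypothetical tool; a real one would call an external API."""
    return {"order_id": order_id, "status": "shipped"}


# Registry of tools the application is willing to run, keyed by name.
TOOLS: dict[str, Callable[..., dict[str, Any]]] = {
    "get_order_status": get_order_status,
}


def dispatch(function_call_json: str) -> dict[str, Any]:
    """Run the tool named in a model's structured function-call request."""
    call = json.loads(function_call_json)
    name = call["name"]
    if name not in TOOLS:
        # Refuse anything that was not explicitly registered.
        return {"error": f"unknown function: {name}"}
    return TOOLS[name](**call.get("args", {}))


# Assumed shape of a model's request; real payloads differ by SDK.
model_request = '{"name": "get_order_status", "args": {"order_id": "A-1001"}}'
result = dispatch(model_request)
print(result)
```

The model only proposes the call. The application decides whether to execute it, which is why the registry acts as an allowlist. The tool’s result would then be returned to the model so it can compose a final answer.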

The Machine Learning Workflow on Vertex AI

For teams running classical ML and predictive workloads, Vertex AI maps tools to each stage of the lifecycle:

| Stage | Vertex AI Tool | Purpose |
|---|---|---|
| Data preparation | Workbench notebooks, BigQuery integration, Dataproc Serverless Spark | Exploratory analysis, large-scale data processing |
| Model training | AutoML, Custom Training, Ray on Vertex AI, Vizier | Code-free or full-control training; hyperparameter tuning |
| Evaluation | Vertex AI Experiments, Model Evaluation | Compare runs, assess performance |
| Deployment | Model Registry, online and batch inference | Version, deploy, and serve models |
| Monitoring | Model Monitoring | Detect training-serving skew and inference drift |
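
As an illustration of what detecting skew or drift means in practice, the sketch below compares a feature’s training distribution with its serving distribution using the population stability index (PSI). PSI is a generic statistic chosen here for simplicity. It is not a description of how Model Monitoring computes its own metrics, and the function name, bin count, and sample values are assumptions.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    serving: Sequence[float],
    bins: int = 4,
) -> float:
    """PSI between two samples of one numeric feature, using equal-width bins."""
    lo = min(min(baseline), min(serving))
    hi = max(max(baseline), max(serving))
    width = (hi - lo) / bins or 1.0  # avoid zero width when all values match

    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so the maximum value falls in the last bin.
            index = min(int((v - lo) / width), bins - 1)
            counts[index] += 1
        # A small floor keeps log() defined for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    expected = proportions(baseline)
    actual = proportions(serving)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


# Hypothetical feature values seen at training time and at serving time.
training_values = [10.0, 12.0, 11.0, 13.0, 12.0, 11.0, 10.0, 12.0]
serving_shifted = [v + 5.0 for v in training_values]

psi_same = population_stability_index(training_values, training_values)
psi_shifted = population_stability_index(training_values, serving_shifted)
print(psi_same, psi_shifted)
```

Identical samples give a PSI of zero, and the value grows as the serving distribution moves away from the training one. A monitoring job would compare that value against a threshold the team chooses.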

Two distinct training modes are available: serverless training, which runs custom code on-demand in a fully managed environment, and training clusters, which provide dedicated, reserved accelerator capacity for large jobs that cannot tolerate scheduling delays.

MLOps Capabilities

The MLOps toolkit is what separates Vertex AI from a collection of standalone notebooks. Pipelines orchestrate workflows as reusable, automated sequences. Model Registry handles versioning and deployment lineage. Feature Store centralizes feature data so the same engineered inputs power both training and real-time prediction. ML Metadata tracks artifacts across runs, and Model Monitoring watches deployed models for drift over time.
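The idea of a pipeline as a reusable sequence with tracked artifacts can be sketched in plain Python. This is a conceptual toy, not the Vertex AI Pipelines API. The `run_pipeline` helper, the step functions, and the lineage record format are all hypothetical.

```python
from typing import Any, Callable

Step = Callable[[Any], Any]


def run_pipeline(
    steps: list[tuple[str, Step]], data: Any
) -> tuple[Any, list[dict[str, Any]]]:
    """Run steps in order, passing each output to the next and logging lineage."""
    lineage: list[dict[str, Any]] = []
    for name, step in steps:
        output = step(data)
        # Record what went in and what came out, as a metadata store would.
        lineage.append({"step": name, "input": data, "output": output})
        data = output
    return data, lineage


def prepare(rows: list) -> list[float]:
    """Drop missing values."""
    return [r for r in rows if r is not None]


def train(rows: list[float]) -> dict[str, float]:
    """Stand-in for training: the 'model' is just the mean of the data."""
    return {"mean": sum(rows) / len(rows)}


def evaluate(model: dict[str, float]) -> dict[str, Any]:
    """Stand-in for evaluation: pass if the mean is positive."""
    return {"model": model, "passed": model["mean"] > 0}


result, lineage = run_pipeline(
    [("prepare", prepare), ("train", train), ("evaluate", evaluate)],
    [3.0, None, 5.0],
)
print(result)
print([entry["step"] for entry in lineage])
```

Because every step’s input and output is recorded, any result can be traced back to the data that produced it. That is the role the paragraph above assigns to ML Metadata and Model Registry.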

Safety, Governance, and Responsible AI

Enterprise adoption requires guardrails. Vertex AI includes built-in safety filters to block harmful content. Model Armor provides runtime defence against emergent threats including prompt injection and data exfiltration attempts. The Generative AI Evaluation service offers data-driven assessment of model and agent performance, helping teams compare candidates objectively before promotion to production.

Each agent’s Identity and Access Management principal creates a clear audit trail, which matters for compliance teams managing regulated workloads.

Pricing Structure

Vertex AI follows a pay-as-you-go model. The headline rates:

| Service | Pricing |
|---|---|
| Text, chat, and code generation | Starting at $0.0001 per 1,000 characters (input or output) |
| Imagen image generation | Starting at $0.0001 per image |
| Vertex AI Pipelines | Starting at $0.03 per pipeline run |
| Custom model training | Based on machine type, region, and accelerators (contact sales) |
| Notebooks | Compute Engine and Cloud Storage rates plus management fees |
| Vector Search | Based on data size, queries per second, and node count |

New customers receive up to $300 in free credits to test Vertex AI alongside other Google Cloud products.
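
A rough lower-bound estimate can be worked out from the two headline rates quoted above. The sketch below assumes the "starting at" rates apply unchanged, which will not hold for every model or region, and the workload figures are invented for illustration.

```python
from decimal import Decimal

# Headline "starting at" rates from the pricing table; actual rates vary.
TEXT_RATE_PER_1K_CHARS = Decimal("0.0001")
PIPELINE_RATE_PER_RUN = Decimal("0.03")


def estimate_monthly_cost(total_chars: int, pipeline_runs: int) -> Decimal:
    """Lower-bound cost for text generation plus pipeline runs, in USD."""
    # total_chars counts input and output characters together.
    text_cost = Decimal(total_chars) / Decimal(1000) * TEXT_RATE_PER_1K_CHARS
    pipeline_cost = Decimal(pipeline_runs) * PIPELINE_RATE_PER_RUN
    return text_cost + pipeline_cost


# Hypothetical workload: 50 million characters and 100 pipeline runs.
cost = estimate_monthly_cost(total_chars=50_000_000, pipeline_runs=100)
print(f"${cost:.2f}")
```

For this hypothetical workload, the text portion comes to $5.00 and the pipeline portion to $3.00, for a total of $8.00 before compute, storage, and any other services.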

Industry Recognition

Google has been named a Leader in the 2025 IDC MarketScape for Worldwide GenAI Life-Cycle Foundation Model Software, a Leader in the Gartner Magic Quadrant for AI Application Development Platforms (Q4 2025), and a Leader in the Forrester Wave: AI/ML Platforms (Q3 2024).

Where Vertex AI Fits Among Cloud AI Platforms

Vertex AI competes most directly with Amazon SageMaker and Azure AI Foundry. Its differentiators are the Gemini family of native multimodal models, the breadth of Model Garden (which includes direct competitors’ models such as Claude and Llama as managed services), and tight integration with BigQuery for data warehouse-based machine learning. For teams already on Google Cloud, the platform removes most of the integration work that comes with running AI workloads on a different cloud.

Getting Started

The fastest entry point is Vertex AI Studio for prompt experiments, followed by the Model Garden to compare options. Developers building agents should start with the Agent Development Kit codelabs. Data scientists running custom training can begin with Workbench notebooks and progress to Vertex AI Pipelines once their workflow stabilizes.

The platform’s documentation lives at Google Cloud’s official site, with the Gemini Enterprise Agent Platform documentation now serving as the authoritative source as Vertex AI’s branding evolves.


Sources: Google Cloud

Written by Alius Noreika