Key Takeaways

- Prompt chaining splits complex AI requests into sequential sub-tasks. For multi-step work it tends to outperform a single prompt, with practitioner estimates and early research suggesting output quality gains of roughly 20%.

- Research published in Findings of ACL 2024 found that chained refinement beats stepwise refinement within a single prompt for text summarization tasks.

- A 2025 study on intelligent assistants found prompt chaining improved dialogue accuracy by an average of 8% and significantly raised human evaluator scores for consistency and personalization.

- Prompt chaining adds latency due to multiple API calls, but the tradeoff is better accuracy, easier debugging, and greater control over each stage of output.

- Standard prompt engineering remains the faster, simpler choice for single-step tasks, creative writing, and quick queries that don’t involve multi-part reasoning.

- The two techniques are not rivals. Prompt chaining is an advanced implementation of prompt engineering, and practitioners get the best results by combining both.

The Short Answer: Chaining Wins on Complex Tasks

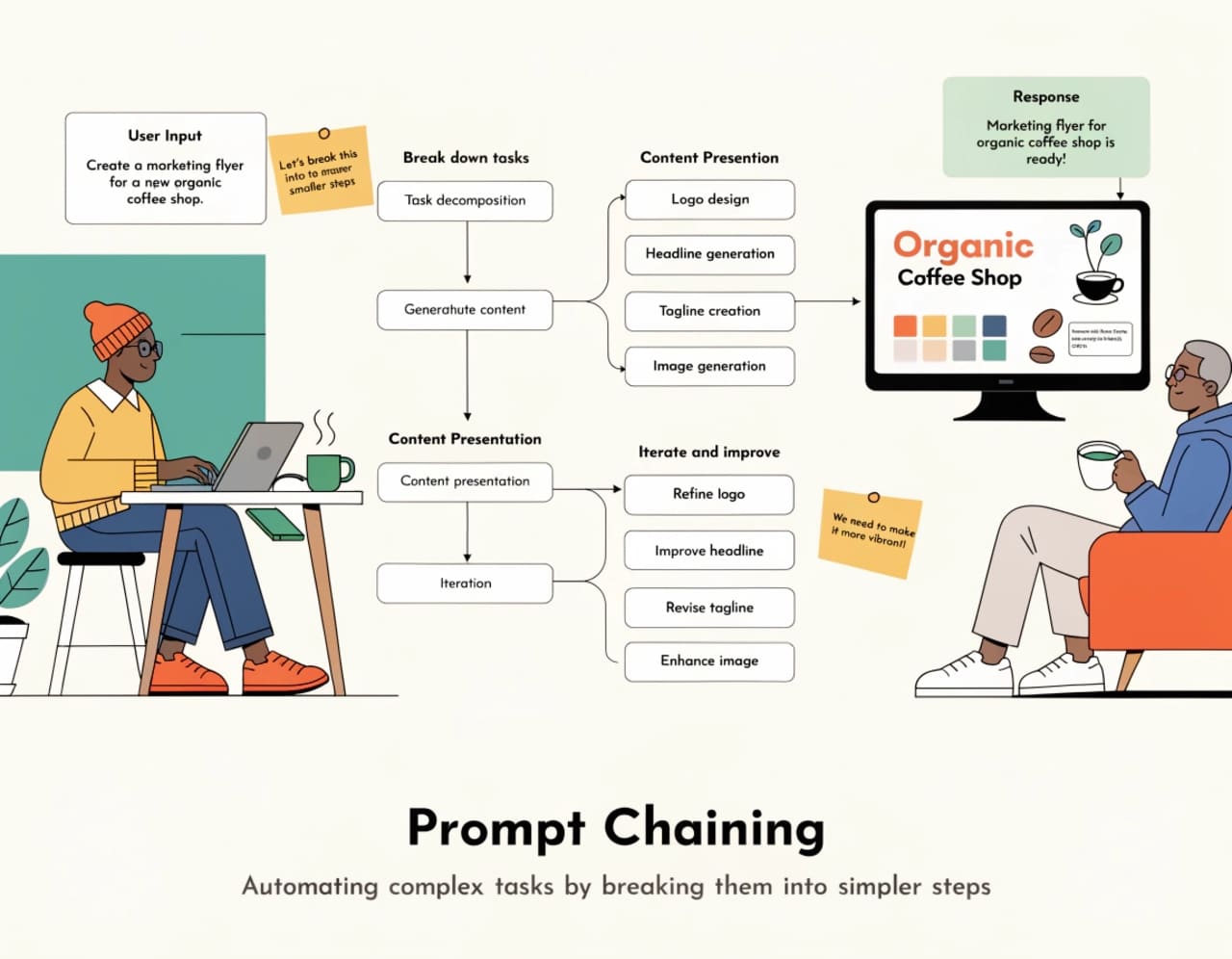

For anyone asking whether prompt chaining outperforms traditional prompt engineering, the evidence is clear: yes, for complex and multi-step work. Prompt chaining — the practice of dividing a large request into a sequence of smaller, focused prompts where each output feeds into the next — produces more accurate, more consistent, and more controllable results than trying to pack everything into a single instruction. Estimates from practitioners and early research suggest an approximate 20% improvement in output quality on tasks that involve multiple logical steps.

That said, “more effective” does not mean “always better.” For simple queries, quick creative tasks, or situations where speed matters more than precision, a well-crafted single prompt still does the job. The real question is not which technique to choose, but when to apply each one.

What Distinguishes Prompt Chaining from Standard Prompt Engineering

Prompt engineering is the broader discipline. It covers everything involved in crafting instructions for a large language model: choosing the right wording, adding context, providing examples, specifying output formats, and applying techniques like zero-shot, few-shot, or chain-of-thought prompting. A single, well-structured prompt is the default tool.

Prompt chaining is a specific technique within that discipline. Instead of writing one monolithic prompt, you decompose a task into discrete steps and run them in sequence. The output of step one becomes the input for step two, and so on — like passing a baton in a relay.
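The baton-passing idea can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: `run_chain` and `fake_llm` are hypothetical names, and `fake_llm` merely wraps its prompt in angle brackets so the example runs without any API.

```python
from typing import Callable, List


def run_chain(prompts: List[str], first_input: str, llm: Callable[[str], str]) -> str:
    """Run prompt templates in order, feeding each output into the next prompt."""
    current = first_input
    for template in prompts:
        # The previous output is substituted into the next template.
        current = llm(template.format(input=current))
    return current


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model call, so the sketch runs offline."""
    return f"<{prompt}>"


result = run_chain(
    ["Summarize: {input}", "Rewrite formally: {input}"],
    "raw notes",
    fake_llm,
)
print(result)  # <Rewrite formally: <Summarize: raw notes>>
```

The nested output shows the relay: the second prompt contains the full result of the first.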

| Feature | Single-Prompt Engineering | Prompt Chaining |
|---|---|---|
| Structure | One prompt handles the entire task | Task split into sequential sub-prompts |
| Best for | Simple queries, creative tasks, quick answers | Multi-step workflows, data analysis, long-form content |
| Accuracy on complex tasks | Lower: the model struggles to juggle many instructions | Higher: each step focuses on one objective |
| Debugging | Difficult to isolate where the model went wrong | Easy: you can inspect each step individually |
| Setup time | Minimal | Requires planning the chain and defining inputs/outputs |
| Latency | Single API call | Multiple API calls, higher total latency |
| Hallucination risk | Higher on complex requests | Lower: narrower scope per step reduces drift |
| Control over output | Limited: you get one shot | Granular: adjust tone, detail, and format at each stage |

IBM’s prompt engineering documentation describes prompt chaining as an “advance implementation of prompt engineering” that can outperform other approaches including zero-shot, few-shot, and fine-tuned models when applied to tasks that benefit from structured decomposition.

What the Research Says

ACL 2024: Chaining Beats Stepwise Prompting for Summarization

A paper by Sun et al. in Findings of ACL 2024 directly compared prompt chaining against stepwise prompting (where all steps are listed in a single prompt) for text summarization. The conclusion was unambiguous: chained refinement produced better summaries than asking the model to handle all refinement steps at once within one prompt. This matches what practitioners report anecdotally: even the first draft in a chain tends to be stronger than what a complex monolithic prompt generates.

Prompt Chaining Improves Dialogue Accuracy by 8%

A study published in the proceedings of the 30th International Conference on Intelligent User Interfaces tested a prompt chaining framework for long-term recall in LLM-powered assistants. On the MultiWOZ 2.1 benchmark dataset, the framework produced an average 8% increase in Joint Goal Accuracy. Human evaluators also scored chained interactions higher across sensibleness (3.2 to 3.8 on a 5-point scale), consistency (2.9 to 3.8), and personalization (2.7 to 3.4).
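For readers unfamiliar with the metric, Joint Goal Accuracy counts a dialogue turn as correct only when the predicted dialogue state matches the gold state on every slot. The sketch below shows that calculation; the slot names and values are invented for illustration and are not taken from the study.

```python
from typing import Dict, List

State = Dict[str, str]  # slot name -> value for one dialogue turn


def joint_goal_accuracy(predicted: List[State], gold: List[State]) -> float:
    """Fraction of turns whose predicted state matches the gold state exactly."""
    if not gold or len(predicted) != len(gold):
        raise ValueError("need equally sized, non-empty lists of turn states")
    exact = sum(1 for p, g in zip(predicted, gold) if p == g)
    return exact / len(gold)


# Illustrative data only: four turns, one of which has a single wrong slot.
gold = [
    {"hotel-area": "north"},
    {"hotel-area": "north", "hotel-stars": "4"},
    {"hotel-area": "north", "hotel-stars": "4", "hotel-parking": "yes"},
    {"hotel-area": "north", "hotel-stars": "4", "hotel-parking": "yes"},
]
predicted = [
    {"hotel-area": "north"},
    {"hotel-area": "north", "hotel-stars": "4"},
    {"hotel-area": "north", "hotel-stars": "3", "hotel-parking": "yes"},
    {"hotel-area": "north", "hotel-stars": "4", "hotel-parking": "yes"},
]
print(joint_goal_accuracy(predicted, gold))  # 0.75
```

Because one wrong slot fails the whole turn, the metric is strict, which is why gains of a few points are treated as meaningful.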

Legal Information Extraction: Chaining Achieves Top F1 Scores

Research presented at the Natural Legal Language Processing Workshop 2024 (Kwak et al.) applied prompt chaining to legal information extraction tasks. Their approach — classify first, then extract — achieved the best F1 scores for both in-domain and out-of-domain datasets when compared to baselines that packed all class examples into a single prompt. The method also reduced token usage, offering a cost benefit alongside improved accuracy.
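A classify-first, extract-second chain might be wired up roughly as follows. This is a sketch of the general pattern, not the authors' implementation: the classes, few-shot examples, prompt wording, and `fake_llm` stub are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical few-shot examples, stored per document class.
EXAMPLES: Dict[str, List[str]] = {
    "lease": ["Text: Rent is 900 per month. -> rent=900"],
    "will": ["Text: The house goes to Ann. -> beneficiary=Ann"],
}


def build_classify_prompt(document: str) -> str:
    labels = ", ".join(sorted(EXAMPLES))
    return f"Classify this document as one of: {labels}.\n\n{document}"


def build_extract_prompt(label: str, document: str) -> str:
    # Only the predicted class's examples are included, which keeps the prompt short.
    shots = "\n".join(EXAMPLES[label])
    return f"Examples:\n{shots}\n\nExtract the same fields from:\n{document}"


def classify_then_extract(document: str, llm: Callable[[str], str]) -> Tuple[str, str]:
    label = llm(build_classify_prompt(document)).strip().lower()
    if label not in EXAMPLES:
        raise ValueError(f"unexpected class: {label!r}")
    return label, llm(build_extract_prompt(label, document))


seen_prompts: List[str] = []


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a model; records prompts and returns canned replies."""
    seen_prompts.append(prompt)
    return "Lease\n" if prompt.startswith("Classify") else "rent=1200"


label, fields = classify_then_extract("Tenant pays rent of 1200 per month.", fake_llm)
print(label, fields)  # lease rent=1200
```

The second prompt never sees the examples for other classes, which is where the token saving in this pattern would come from.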

Why Chaining Produces Better Output

Three mechanisms explain why splitting tasks into steps helps LLMs perform better.

Cognitive focus per step. When a model receives a single prompt containing five different instructions — summarize, extract themes, reorganize, rewrite in a specific tone, add citations — it has to juggle all of them simultaneously. Errors compound. When each instruction runs separately, the model can devote its full attention to one objective at a time.

Iterative refinement mirrors human workflows. Writing a report in one pass is hard for humans too. Chaining follows the same logic most professionals use: draft, review, revise, polish. The model responds well to this structured feedback loop because earlier outputs become explicit, concrete inputs for the next step rather than vague intentions buried in a long prompt.

Structured handoffs reduce ambiguity. When you define clear input and output formats between steps — especially using JSON schemas or explicit templates — you minimize context bleed. Each prompt in the chain knows exactly what data it’s receiving and what it needs to produce.
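One way to make a handoff explicit is to have a step return JSON and check it before building the next prompt. The sketch below uses only Python's standard `json` module; the contract in `REQUIRED_KEYS` and the sample output are hypothetical.

```python
import json
from typing import Any, Dict

# Hypothetical handoff contract between step one and step two.
REQUIRED_KEYS = {"key_points": list, "audience": str}


def parse_handoff(raw: str) -> Dict[str, Any]:
    """Parse a step's JSON output and check it against the agreed contract."""
    data = json.loads(raw)  # raises json.JSONDecodeError on malformed output
    if not isinstance(data, dict):
        raise ValueError("handoff must be a JSON object")
    for key, expected_type in REQUIRED_KEYS.items():
        if key not in data:
            raise ValueError(f"missing key: {key}")
        if not isinstance(data[key], expected_type):
            raise ValueError(f"{key} should be of type {expected_type.__name__}")
    return data


def build_next_prompt(handoff: Dict[str, Any]) -> str:
    points = "\n".join(f"- {point}" for point in handoff["key_points"])
    return f"Write a report for {handoff['audience']} covering:\n{points}"


# Illustrative output from a previous step.
step_one_output = (
    '{"key_points": ["Budget approved", "Launch moved to May"], '
    '"audience": "executives"}'
)
prompt = build_next_prompt(parse_handoff(step_one_output))
print(prompt)
```

If the model returns something outside the contract, the chain stops at the handoff instead of passing a malformed input downstream.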

A Practical Example: From Notes to Polished Draft

Here is how a typical prompt chain might look for turning raw meeting notes into a finished report:

Step 1: “Summarize these meeting notes into five key points.”

Step 2: “Group these five points into two thematic sections with headers.”

Step 3: “Write a 500-word report using these sections. Use a professional but conversational tone.”

Step 4: “Review the report for factual consistency against the original notes. Flag any discrepancies.”

Each step produces a contained, inspectable output. If Step 2 groups the themes poorly, you fix Step 2 without touching anything else. Try isolating that kind of error in a single 200-word prompt that tries to do all four things at once.
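The four steps above could be expressed in code so that every intermediate output is kept and the chain can be rerun from any step. This is a rough sketch under assumptions: the step names, the `run_steps` helper, and the counting `fake_llm` stub are hypothetical, and the `{prev}` and `{notes}` placeholders are one possible way to pass data along.

```python
from typing import Callable, Dict, List, Optional, Tuple

# Step name and prompt template; {prev} is the previous output, {notes} the raw notes.
STEPS: List[Tuple[str, str]] = [
    ("summary", "Summarize these meeting notes into five key points.\n\n{prev}"),
    ("sections", "Group these five points into two thematic sections with headers.\n\n{prev}"),
    ("report", "Write a 500-word report using these sections. "
               "Use a professional but conversational tone.\n\n{prev}"),
    ("review", "Review the report for factual consistency against the original notes. "
               "Flag any discrepancies.\n\nReport:\n{prev}\n\nNotes:\n{notes}"),
]


def run_steps(notes: str, llm: Callable[[str], str],
              outputs: Optional[Dict[str, str]] = None, start: int = 0) -> Dict[str, str]:
    """Run steps from index `start`, reusing earlier outputs; return all outputs by name."""
    results = dict(outputs or {})
    prev = notes if start == 0 else results[STEPS[start - 1][0]]
    for name, template in STEPS[start:]:
        prev = llm(template.format(prev=prev, notes=notes))
        results[name] = prev  # every intermediate output stays inspectable
    return results


calls: List[str] = []


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a model; numbers its replies so reruns are visible."""
    calls.append(prompt)
    return f"output {len(calls)}"


notes = "Raw meeting notes go here."
outputs = run_steps(notes, fake_llm)
# If the grouping looks wrong, rerun from step 2 and keep the existing summary.
redo = run_steps(notes, fake_llm, outputs=outputs, start=1)
print(redo["summary"], "|", redo["sections"])  # output 1 | output 5
```

In practice you would edit the step 2 template before rerunning; the point is that step 1 does not have to be repeated.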

This is the same workflow that practitioner Matt Goddard described on Medium when converting messy project notes into a proposal. After multiple failed attempts with a single prompt, where the output kept turning vague and hallucination-prone, he broke the task into summarization, theme identification, and section-by-section drafting. The result still needed editing, but first-draft quality improved dramatically.

When a Single Prompt Is the Right Choice

Not every task benefits from chaining. These situations call for standard prompt engineering:

A straightforward question with a direct answer does not need decomposition. Asking “What is the capital of France?” through a three-step chain would waste time and tokens.

Creative or open-ended prompts often work better as single interactions. If you want a brainstorm, a poem, or a loose exploration of ideas, the freedom of a single prompt gives the model room to be inventive.

Speed-sensitive applications where latency matters — such as real-time chatbots — may not tolerate the overhead of multiple sequential API calls.

Cost-sensitive tasks, where the expense of additional API calls outweighs the quality improvement, are also better served by a single prompt. Every step in a chain consumes tokens, and for low-stakes content that additional expense may not be justified.
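The latency and cost tradeoff can be estimated with simple arithmetic before committing to a chain. Every number in the sketch below is invented for illustration, including the token counts, per-call latencies, and prices; substitute figures from your own provider and workload. Depending on how the prompts are structured, a chain can also use fewer tokens than a single large prompt.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Call:
    input_tokens: int
    output_tokens: int
    latency_s: float


def total_cost(calls: List[Call], in_price_per_1k: float, out_price_per_1k: float) -> float:
    """Sum the token cost of all calls at the given per-1,000-token prices."""
    return sum(
        c.input_tokens / 1000 * in_price_per_1k + c.output_tokens / 1000 * out_price_per_1k
        for c in calls
    )


def total_latency(calls: List[Call]) -> float:
    """Chain steps run one after another, so their latencies add up."""
    return sum(c.latency_s for c in calls)


# Hypothetical workload: one large prompt versus a four-step chain.
single = [Call(2000, 700, 6.0)]
chain = [Call(2000, 150, 2.0), Call(200, 200, 2.0), Call(250, 700, 6.0), Call(2700, 100, 2.0)]

# Hypothetical prices per 1,000 tokens.
IN_PRICE, OUT_PRICE = 0.001, 0.002

for name, calls in (("single", single), ("chain", chain)):
    print(name, round(total_cost(calls, IN_PRICE, OUT_PRICE), 5), total_latency(calls))
```

With these made-up figures the chain costs roughly twice as much and takes twice as long, which is the kind of gap to weigh against the expected quality gain.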

Tools for Automating Prompt Chains

You don’t have to copy and paste between prompts manually, though that works fine for simple chains. Several platforms support automated prompt chaining:

| Tool | What It Does |
|---|---|
| LangChain | Open-source framework for building multi-step LLM workflows with retrieval, memory, and tool integration |
| Zapier / Make.com | No-code automation platforms that can sequence API calls between prompts |
| n8n | Open-source workflow automation with LLM nodes for chaining |
| Microsoft Copilot Studio | Visual builder for creating multi-step AI workflows |
| MindPal | Purpose-built tool for designing and running prompt chains |

For developers working directly with APIs, chaining can be implemented in any programming language by passing the response of one API call as the input for the next, with optional validation or transformation steps between them.

Common Mistakes When Building Prompt Chains

Skipping validation between steps. If Step 1 produces a bad summary, every downstream step inherits that error. Building in a quick review — automated or manual — after critical steps prevents cascading failures.

Over-engineering simple tasks. Some tasks genuinely need just one prompt. Adding a chain to a simple request adds complexity and latency with no quality payoff. As one Reddit user put it: “Sometimes it’s better to just write the damn thing.”

Ignoring context window limits. As chains grow longer and outputs accumulate, you can exceed the model’s context window. Structuring handoffs with concise, focused data — rather than dumping every prior output into the next prompt — keeps chains manageable.

Not defining clear inputs and outputs. Vague handoffs between steps produce vague results. Specifying formats (JSON, numbered lists, specific templates) at each stage keeps the chain tight.
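Two of the mistakes above, skipping validation and overloading the context window, can be guarded against with small helpers. The sketch below is one possible approach under assumptions: the function names are hypothetical, the five-bullet check is just an example rule, and a character budget is used as a crude proxy for a token limit.

```python
from typing import Callable, Dict, List


def run_validated_step(prompt: str, llm: Callable[[str], str],
                       is_valid: Callable[[str], bool], max_attempts: int = 2) -> str:
    """Call the model and retry until the output passes the check."""
    for _ in range(max_attempts):
        output = llm(prompt)
        if is_valid(output):
            return output
    raise RuntimeError(f"output failed validation after {max_attempts} attempts")


def has_five_points(text: str) -> bool:
    """Hypothetical check: a summary step should return exactly five bullets."""
    return sum(line.strip().startswith("- ") for line in text.splitlines()) == 5


def build_context(outputs: Dict[str, str], needed: List[str], max_chars: int = 2000) -> str:
    """Pass on only the outputs the next step needs, within a size budget."""
    context = "\n\n".join(f"[{name}]\n{outputs[name]}" for name in needed)
    if len(context) > max_chars:
        raise ValueError(f"context is {len(context)} chars; budget is {max_chars}")
    return context


# Hypothetical stand-in model: the first reply is too short, the second is valid.
replies = iter(["- only one point", "- a\n- b\n- c\n- d\n- e"])
summary = run_validated_step(
    "Summarize into five key points.", lambda _prompt: next(replies), has_five_points
)

outputs = {"notes": "x" * 5000, "summary": summary}
context = build_context(outputs, needed=["summary"])  # the raw notes are left out
print(context)
```

Raising an error when the budget is exceeded, rather than silently truncating, makes it obvious where a summarization step should be added to the chain.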

The Bottom Line: Use Both, Strategically

Prompt chaining and prompt engineering are not competing methods. One is a specialization of the other. The strongest approach combines solid prompt engineering fundamentals — clear language, specific instructions, appropriate context — with chaining whenever a task has multiple distinct phases.

For anyone working regularly with LLMs, learning to identify where a task naturally breaks into steps is one of the highest-leverage skills available. The question to ask is not “How can I get the AI to do this entire job in one go?” but rather “Where in this task would I slow down or get stuck?” Those friction points are exactly where a new link in the chain belongs.

Sources: Matt Goddard on Medium, IBM, ACL Anthology, ACM Digital Library, ACL Anthology (2)

Written by Alius Noreika