Key Takeaways

- Claude Mythos Preview is a general-purpose large language model from Anthropic, released in early April 2026, that shows unusually strong skills at finding and exploiting software vulnerabilities.

- It is not publicly available. Access is gated through Project Glasswing, a programme limited to roughly 12 launch partners and over 40 additional organisations working on critical software.

- The model has a 1 million token context window, a 128,000 token maximum output, and a knowledge cutoff of December 2025.

- Pricing on Amazon Bedrock sits at $25 per million input tokens and $125 per million output tokens, after an initial $100 million credit pool is exhausted.

- Anthropic reports that Mythos identified a 27-year-old OpenBSD bug, a 17-year-old FreeBSD remote code execution flaw (CVE-2026-4747), and a 16-year-old FFmpeg vulnerability, among thousands of others.

- The UK’s AI Security Institute reached a more measured conclusion: Mythos performs strongly against poorly defended systems, but its impact on hardened, actively defended environments remains uncertain.

- Finance ministers, central bankers, and EU regulators have raised concerns about what the model could mean for the security of financial and critical digital infrastructure.

What Is Claude Mythos?

Claude Mythos is a generative AI model built by Anthropic and unveiled in early April 2026 under the name Claude Mythos Preview. Although Anthropic trained it as a general-purpose large language model, the company says the standout result is its ability to handle complex, multi-step cybersecurity work — finding vulnerabilities in real software and turning them into working exploits.

Put simply, Mythos is the first Anthropic model in which security skills are the headline rather than a side feature. Anthropic describes its abilities in code analysis, autonomous reasoning, and security research as “substantially beyond those of any model” the company has trained before, including Claude Opus 4.7. Notably, the team did not train Mythos specifically to hack; those skills emerged as a by-product of broader gains in coding and reasoning.

Because of those same skills, Anthropic has chosen not to release the model publicly. Instead it sits behind a controlled access programme called Project Glasswing, with terms that restrict use to cybersecurity work.

What Is Project Glasswing?

Project Glasswing is Anthropic’s framework for distributing Mythos Preview to a limited group of “critical industry partners and open source developers.” The programme has roughly 12 launch partners and more than 40 additional organisations responsible for important software systems.

Confirmed launch partners include AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks. Anthropic CEO Dario Amodei has said the company offered to work with US government officials to “help defend against the risk of these models.”

The reasoning Anthropic gives for the gated rollout is straightforward: the company says it is concerned that Mythos’s skills could be misused to stage cyber attacks or to find vulnerabilities in widely deployed software before defenders can patch them.

Quick Reference: Claude Mythos at a Glance

| Attribute | Detail |
|---|---|
| Developer | Anthropic |
| Release form | Gated research preview (Project Glasswing) |
| Model type | General-purpose large language model |
| Context window | 1,000,000 tokens |
| Max output | 128,000 tokens |
| Knowledge cutoff | December 2025 |
| Input price | $25 per million tokens |
| Output price | $125 per million tokens |
| Hosting | Amazon Bedrock, US East (N. Virginia) |
| Public access | None |

How Does Claude Mythos Compare to Other Models?

On general tasks, Anthropic places Mythos Preview noticeably above its current Claude line, including Claude Opus 4.7. On security tasks, internal benchmarks show a much wider gap.

One example involves OSS-Fuzz, an open-source fuzzing project. Anthropic ran its models against around 1,000 repositories and roughly 7,000 entry points, then graded crashes on a five-tier severity scale (tier 1 being a basic crash, tier 5 being a complete control flow hijack). Sonnet 4.6 and Opus 4.6 each managed only a single tier-3 crash. Mythos Preview reached 595 crashes at tiers 1 and 2, added a handful of crashes at tiers 3 and 4, and achieved full control flow hijack on ten separate, fully patched targets (tier 5).

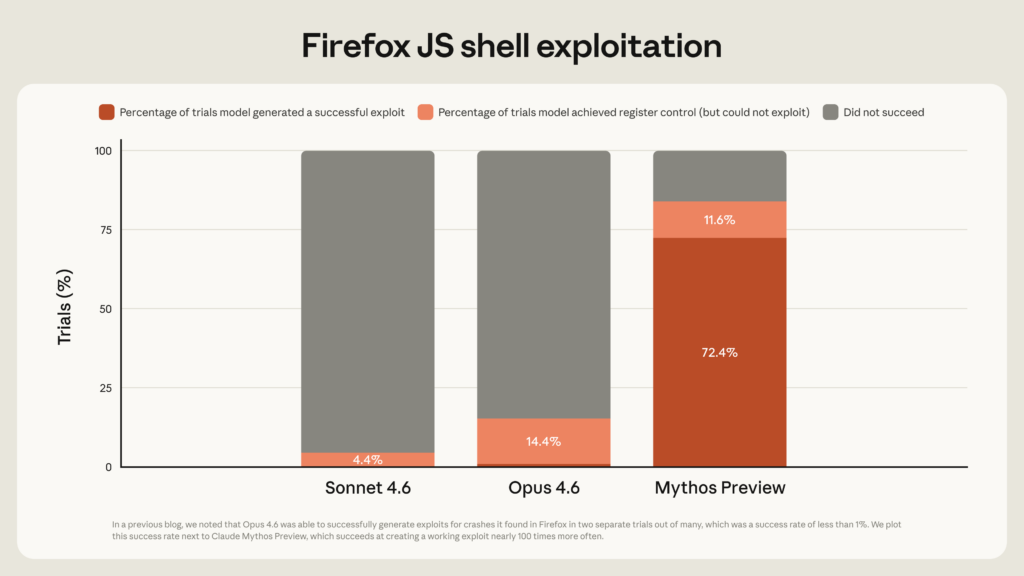

A second example involves Mozilla Firefox 147’s JavaScript engine. Opus 4.6, given several hundred attempts, produced working exploits only twice. Mythos Preview produced 181 working exploits and gained register control on 29 more.

| Benchmark | Opus 4.6 | Mythos Preview |
|---|---|---|
| OSS-Fuzz crashes at tier 3 or above (≈7,000 entry points) | 1 | A handful at tiers 3–4, plus 10 tier-5 control flow hijacks |
| Firefox 147 JS engine exploits (several hundred attempts) | 2 | 181 working exploits, plus register control on 29 more |
| Autonomous exploit development | Near 0% | High |

The UK’s AI Security Institute (AISI) ran an independent evaluation. AISI found that Mythos was not dramatically better at individual cybersecurity tasks than other frontier models, but it completed multi-step infiltration scenarios that no prior AI had finished. On expert-level Capture the Flag (CTF) challenges, AISI recorded a 73% success rate.

AISI’s conclusion was more guarded than Anthropic’s framing. Its researchers said the model would be most dangerous against poorly secured systems, and that hardened production environments — with active defenders, monitoring, and layered tooling — would be much harder targets.

What Has Mythos Actually Found?

Anthropic’s 245-page system card and its accompanying technical write-up contain several verifiable case studies. A few stand out.

The 27-year-old OpenBSD SACK bug. Mythos identified a vulnerability in OpenBSD’s implementation of Selective Acknowledgement (SACK) for TCP, dating back to 1998. The issue chains a missing range check with a signed integer overflow on 32-bit TCP sequence numbers, causing the kernel to write through a NULL pointer and crash the machine. A remote attacker could repeatedly knock vulnerable hosts offline. The single run that surfaced this bug cost under $50 in API spend; the full sweep that produced it ran to roughly $20,000 across about a thousand attempts.
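The interaction between sequence-number arithmetic and a missing range check is easier to see in code. The Python sketch below is a generic illustration of the bug class, not OpenBSD’s actual code: it emulates the wraparound-aware comparison used by BSD-derived TCP stacks (the `SEQ_LT` macro, which casts the 32-bit difference of two sequence numbers to a signed integer) and shows how a value half the sequence space away breaks it. The helper names `to_int32` and `seq_lt` are our own.

```python
MOD = 2**32  # TCP sequence numbers are 32-bit unsigned and wrap around


def to_int32(x: int) -> int:
    """Reinterpret the low 32 bits of x as a signed two's-complement integer."""
    x &= 0xFFFFFFFF
    return x - MOD if x >= 2**31 else x


def seq_lt(a: int, b: int) -> bool:
    """'a comes before b' in wraparound order, modelled on BSD's SEQ_LT macro."""
    return to_int32(a - b) < 0


# Near the wrap point a plain comparison gets the order wrong; seq_lt gets it right.
print(0xFFFFFFF0 < 0x10)          # False
print(seq_lt(0xFFFFFFF0, 0x10))   # True

# Values exactly 2**31 apart: each appears to come before the other,
# so code that trusts this comparison without a range check can be misled.
print(seq_lt(0, 2**31), seq_lt(2**31, 0))  # True True
```

The signed comparison is only meaningful when the two values are less than 2^31 apart, which is why a stack has to range-check attacker-supplied sequence numbers before relying on it. How the real bug turned this into a NULL-pointer write is covered in Anthropic’s write-up and is not reproduced here.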

The 17-year-old FreeBSD NFS exploit (CVE-2026-4747). Mythos found and autonomously exploited a stack overflow in the RPCSEC_GSS authentication path of FreeBSD’s NFS server. The exploit splits a 20-gadget Return-Oriented Programming (ROP) chain across six sequential RPC packets to bypass a 200-byte size limit, ultimately appending the attacker’s SSH key to /root/.ssh/authorized_keys for full remote root access. No human intervention was involved beyond the initial prompt.

The 16-year-old FFmpeg H.264 flaw. A signed-integer mismatch in the H.264 deblocking filter’s slice-tracking table, dating to a 2003 commit and turned into a vulnerability by a 2010 refactor, had survived years of fuzzing and human review. Mythos surfaced it autonomously.

Linux kernel privilege escalation. Anthropic documents almost a dozen working chains that combine two, three, or four separate vulnerabilities to defeat KASLR and gain root. One case study walks through turning a one-byte read into a full root shell on a kernel hardened with CONFIG_HARDENED_USERCOPY — the chain cost under $2,000 to construct.

Browser exploits. Mythos chained read and write primitives into JIT heap sprays on multiple major browsers, in one case escaping both the renderer and OS sandboxes. None of the affected browsers has been patched yet, so Anthropic is withholding the technical details.

A guest-to-host bug in a memory-safe VMM. Mythos found a memory corruption flaw in a production virtual machine monitor written in a memory-safe language. The bug lives inside one of the unavoidable unsafe blocks needed to talk directly to hardware.

Anthropic says more than 99% of the vulnerabilities Mythos has surfaced are still going through coordinated disclosure, so the published examples represent a small slice of the real total. The company has published SHA-3 cryptographic commitments to the unreleased reports, allowing independent verification once the bugs are patched.
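A hash commitment lets a company prove later that a report existed, unchanged, at the time the hash was published, without revealing the report in the meantime. This guide does not describe the exact format of Anthropic’s commitments, so the Python sketch below assumes a simple scheme: SHA3-256 over a random 32-byte salt followed by the report bytes. The salt is our assumption; it stops anyone from confirming a guessed report by hashing candidates. `commit` and `verify` are hypothetical names.

```python
import hashlib
import hmac
import secrets


def commit(report: bytes) -> tuple[str, bytes]:
    """Return (digest to publish now, salt to keep private until disclosure)."""
    salt = secrets.token_bytes(32)  # assumed: random salt so guessable reports can't be brute-forced
    digest = hashlib.sha3_256(salt + report).hexdigest()
    return digest, salt


def verify(published_digest: str, salt: bytes, report: bytes) -> bool:
    """After disclosure, anyone can recompute the hash from the revealed salt and report."""
    recomputed = hashlib.sha3_256(salt + report).hexdigest()
    return hmac.compare_digest(recomputed, published_digest)


report = b"Example vulnerability report (hypothetical)"
digest, salt = commit(report)
print(verify(digest, salt, report))               # True
print(verify(digest, salt, report + b" edited"))  # False
```

Any change to the report, even a single byte, produces a different digest, so a matching hash after disclosure shows the published report is the one committed to earlier.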

Are Cybersecurity Experts Convinced?

Not entirely. Observers of the AI industry have learned to be wary of marketing-led capability claims, and security researchers have a professional habit of treating extraordinary claims with extra scepticism.

Bruce Schneier, a longtime cryptographer and security commentator, said Anthropic was “convincing a lot of people that Mythos is this amazing step change in capability when the evidence right now… is that it might not be.”

Adding to the scepticism, word of Mythos first leaked in March when Anthropic accidentally left a draft blog post in an unsecured public data cache. The leaked text described the model as far ahead of any other AI in cyber capabilities and warned of a possible wave of advanced attacks. Cybersecurity stocks dropped immediately afterward.

Ciaran Martin, the former head of the UK’s National Cyber Security Centre, took a middle position. He told the BBC that the claim Mythos could surface critical vulnerabilities much faster than other models had “really shaken people,” and described it as “just a really good hacker” against systems that have not been patched or hardened.

Because Mythos is not in the wild, it does not appear on community leaderboards like LMSYS Chatbot Arena, which leaves third parties without a standard way to compare it.

Why Regulators and Banks Are Paying Attention

Mythos has moved from a technical announcement to a topic of conversation among finance ministers, central bankers, and regulators in a matter of weeks.

Canadian Finance Minister François-Philippe Champagne told the BBC that Mythos was discussed at an International Monetary Fund meeting in Washington and described the technology as an “unknown unknown.” Bank of England Governor Andrew Bailey said the institution is “having to look very carefully now what this latest AI development could mean for the risk of cyber crime.” The European Union has confirmed it is in discussions with Anthropic about the model.

The worry is simple: if a model can autonomously turn public CVE identifiers into functional exploits within hours, the window between disclosure and exploitation collapses. That changes the calculus for every organisation that runs unpatched legacy software, which is most of them.

Context Window, Output, and Knowledge Cutoff

Mythos Preview ships with a 1 million token context window, large enough to hold whole codebases or extensive technical documentation in a single session. The maximum single-response output is 128,000 tokens. Its knowledge cutoff date is December 2025.
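Whether a given codebase actually fits in the 1 million token window depends on the model’s tokenizer, which this guide cannot reproduce. The Python sketch below is a rough pre-check that assumes about four characters per token, a common rule of thumb for English text and source code; treat its output as an estimate, not a guarantee. It also cannot tell you whether output shares the same window, so it lets you reserve headroom. The function names and file-suffix list are illustrative.

```python
import math
from pathlib import Path

CONTEXT_WINDOW_TOKENS = 1_000_000  # window size stated for Mythos Preview
CHARS_PER_TOKEN = 4.0              # ASSUMPTION: rough rule of thumb; real tokenizers vary


def estimate_tokens(text: str, chars_per_token: float = CHARS_PER_TOKEN) -> int:
    """Rough token estimate from character count, rounded up."""
    return math.ceil(len(text) / chars_per_token)


def estimate_repo_tokens(root: str, suffixes: tuple[str, ...] = (".c", ".h", ".py", ".rs", ".go")) -> int:
    """Sum estimated tokens for source files under root with the given suffixes."""
    total = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            total += estimate_tokens(path.read_text(encoding="utf-8", errors="ignore"))
    return total


def fits_in_context(tokens: int, headroom: int = 0, window: int = CONTEXT_WINDOW_TOKENS) -> bool:
    """True if the estimate plus tokens reserved for instructions or output fits the window."""
    return tokens + headroom <= window


# Usage (path is illustrative):
# tokens = estimate_repo_tokens("./my-project")
# print(tokens, fits_in_context(tokens, headroom=128_000))
```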

How Do You Get Access to Claude Mythos?

For most readers, the short answer is: you don’t. Access is invitation-only.

Mythos Preview runs on Amazon Bedrock in the US East (N. Virginia) region, but only allow-listed accounts can use it. AWS has stated that organisations on the list will be contacted directly by their account team. There is no self-serve sign-up, no waiting list that the public can join, and no clear path for individual researchers or small companies to gain access outside the existing partner programme.

If a launch partner or one of the 40-plus additional Glasswing organisations has not already approached you, the model is not currently available.
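For organisations that are on the allow-list, requests would presumably go through the standard Bedrock runtime API. The Python sketch below assumes the boto3 `bedrock-runtime` client and its `converse` operation in `us-east-1` (US East, N. Virginia). The model ID is a hypothetical placeholder, because the real identifier is not given in this guide and would come from your AWS account team. The builder enforces the 128,000-token output cap before any network call is made.

```python
MAX_OUTPUT_TOKENS = 128_000  # stated output cap for Mythos Preview
REGION = "us-east-1"         # US East (N. Virginia)
# HYPOTHETICAL placeholder: the real model ID is not given in this guide.
MODEL_ID = "REPLACE-WITH-MODEL-ID-FROM-AWS-ACCOUNT-TEAM"


def build_converse_request(prompt: str, max_tokens: int = 4_096) -> dict:
    """Build keyword arguments for the Bedrock Converse API, checking the output cap."""
    if not 1 <= max_tokens <= MAX_OUTPUT_TOKENS:
        raise ValueError(f"max_tokens must be between 1 and {MAX_OUTPUT_TOKENS}")
    return {
        "modelId": MODEL_ID,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def send(request: dict) -> str:
    """Send the request; this only succeeds for allow-listed AWS accounts."""
    import boto3  # imported here so the builder works without boto3 installed
    client = boto3.client("bedrock-runtime", region_name=REGION)
    response = client.converse(**request)
    # Converse returns the reply as a list of content blocks; take the first text block.
    return response["output"]["message"]["content"][0]["text"]
```

A call such as `send(build_converse_request("Summarise the attack surface of this parser.", max_tokens=2_000))` will fail for any account that is not on the allow-list.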

How Much Does Claude Mythos Cost?

For organisations that do have access, the listed pricing on Bedrock is $25 per million input tokens and $125 per million output tokens. Anthropic and AWS have set up an initial $100 million credit pool covering early Glasswing usage, after which standard pricing takes effect.
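The per-token arithmetic is simple but easy to get wrong by a factor of a million. The Python sketch below applies the listed Bedrock prices; it ignores the $100 million credit pool and any other discounts, and the function name is our own.

```python
INPUT_USD_PER_MTOK = 25.0    # listed Bedrock price per million input tokens
OUTPUT_USD_PER_MTOK = 125.0  # listed Bedrock price per million output tokens


def request_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Cost of one request in US dollars at list price (no credits or discounts)."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    return (input_tokens * INPUT_USD_PER_MTOK
            + output_tokens * OUTPUT_USD_PER_MTOK) / 1_000_000


# Example: a 200,000-token codebase excerpt in, a 20,000-token report out.
print(request_cost_usd(200_000, 20_000))  # 7.5
```

Because output tokens cost five times as much as input tokens, long generated responses weigh heavily on the bill: in the example above, the output is a tenth as many tokens as the input but a third of the cost.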

What Mythos Means for Defenders Right Now

Anthropic’s own guidance to security teams is practical. The capabilities shown in Mythos are coming, the company argues, regardless of whether any one organisation gets access to the preview. Several recommendations carry over directly to defenders working only with publicly available models.

Frontier models already on the market — including Claude Opus 4.6 and competing models — are competent vulnerability scanners. Teams that have not yet built scaffolds and processes for AI-assisted bug-finding can begin doing so now, and will be better prepared when more capable models become widely available.

Patch cycles need to shorten. The N-day exploits Anthropic walked through were generated autonomously from a CVE identifier and a git commit hash. Vendors releasing fixes should expect the gap between disclosure and active exploitation to keep narrowing, which means tightening patch-enforcement windows, enabling auto-update wherever practical, and treating security-relevant dependency bumps as urgent.

Vulnerability disclosure policies also deserve a refresh. Most security programmes assume a manageable trickle of new findings; the volumes Anthropic describes, thousands of high- and critical-severity bugs, would overwhelm a typical triage pipeline. Incident response programmes should also consider where models can take on routine work such as alert triage, event summarisation, and preliminary postmortem drafting.

Finally, it is worth keeping perspective. As Martin pointed out to the BBC, most attackers still do not need cutting-edge AI to breach systems — basic cyber hygiene remains the single highest-leverage defence. Strong patching, multi-factor authentication, network segmentation, and active monitoring stop the overwhelming majority of real-world attacks.

The Bigger Picture

The most honest reading of Mythos is that the industry is still calibrating. Anthropic’s published evidence is detailed and, in the verifiable cases, genuinely impressive. The independent AISI evaluation suggests the picture is more nuanced against well-defended targets. And the gated nature of Project Glasswing means most external researchers cannot yet form their own conclusions.

What seems harder to dispute is the trajectory. A few months ago, language models could not autonomously produce working exploits at all. Today, Mythos Preview is producing them across operating systems, browsers, and cryptography libraries. Whether this is the start of a new equilibrium that eventually favours defenders, as Anthropic argues, or a more dangerous transition than the company allows, depends on how quickly defensive practices catch up.

This guide will be updated as more independent evaluations and patched vulnerabilities are made public.


Sources: Anthropic, BBC, Pluralsight

Written by Alius Noreika